deepconsensus

| Entity Passport | |
|---|---|
| Registry ID | gh-model--google--deepconsensus |
| License | BSD-3-Clause |
| Provider | github |

Cite this model

Academic & Research Attribution

@misc{gh_model__google__deepconsensus,
  author = {google},
  title = {deepconsensus Model},
  year = {2026},
  howpublished = {\url{https://github.com/google/deepconsensus}},
  note = {Accessed via Free2AITools Knowledge Fortress}
}


DeepConsensus

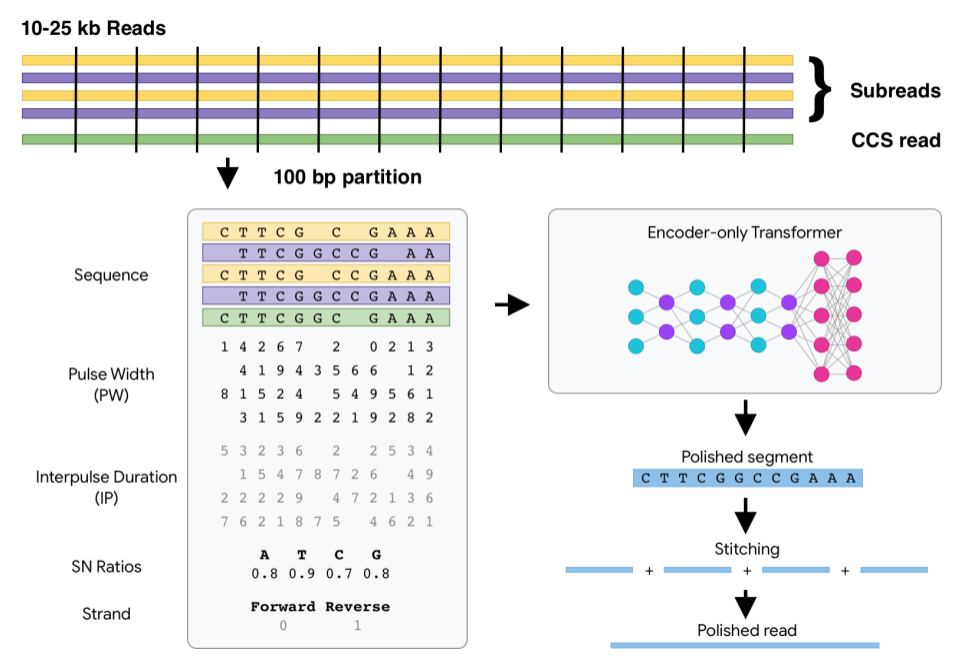

DeepConsensus uses gap-aware sequence transformers to correct errors in Pacific Biosciences (PacBio) Circular Consensus Sequencing (CCS) data.

This results in greater yield of high-quality reads. See yield metrics for results on three full SMRT Cells with different chemistries and read length distributions.
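To make "greater yield" concrete, here is a minimal sketch that assumes yield means the total number of bases in reads at or above a read-level quality threshold (for example Q20 or Q30); the yield metrics page is the authority on the exact definition used there. The helper `yield_at_q` and the example reads are hypothetical and purely illustrative, not DeepConsensus output.

```python
from typing import Iterable, Tuple


def yield_at_q(reads: Iterable[Tuple[int, float]], min_q: float) -> int:
    """Total bases in reads whose read-level Phred quality is at least min_q.

    Each read is a (length_in_bases, read_quality) pair.
    """
    return sum(length for length, q in reads if q >= min_q)


# Made-up reads for illustration only: (length, read-level Phred Q).
example_reads = [(15_000, 32.1), (18_500, 24.7), (12_000, 19.4), (20_000, 35.0)]
print(yield_at_q(example_reads, 20))  # 53500: only the Q19.4 read is excluded
print(yield_at_q(example_reads, 30))  # 35000: the Q32.1 and Q35.0 reads
```

Under this definition, any read that correction lifts across the threshold adds its full length to the yield, which is why modest per-read accuracy gains can translate into noticeably more usable bases.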

Usage

See the quick start for how to run DeepConsensus, along with guidance on how to shard and parallelize most effectively.

`ccs` settings matter

To get the most out of DeepConsensus, we highly recommend that you run `ccs` with the parameters given in the quick start. This is because, by default, `ccs` filters out reads with a predicted quality below 20, and DeepConsensus cannot rescue reads that were filtered out before it runs. The runtime of `ccs` is low enough that this extra step is worth doing whenever you use DeepConsensus.
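A predicted quality of 20 is on the Phred scale, where the error probability is 10^(-Q/10), so Q20 corresponds to a predicted read accuracy of 0.99. `ccs` records predicted accuracy per read (the `rq` tag in its BAM output). The minimal sketch below converts between the two scales so you can see which reads a Q20 cutoff would discard; the helper names are hypothetical and the cap of Q60 for a perfect prediction is an arbitrary assumption.

```python
import math


def phred_to_accuracy(q: float) -> float:
    """Predicted accuracy for a Phred-scaled quality Q: 1 - 10^(-Q/10)."""
    return 1.0 - 10 ** (-q / 10.0)


def accuracy_to_phred(accuracy: float, max_q: float = 60.0) -> float:
    """Phred-scaled quality for a predicted accuracy (e.g. an `rq` value)."""
    error = 1.0 - accuracy
    if error <= 0.0:
        # A perfect prediction would be infinite Q; cap it (the cap is arbitrary).
        return max_q
    return min(max_q, -10.0 * math.log10(error))


def passes_q_filter(accuracy: float, min_q: float = 20.0) -> bool:
    """True if a read meets a minimum predicted quality, compared in accuracy space."""
    return accuracy >= phred_to_accuracy(min_q)


if __name__ == "__main__":
    for rq in (0.90, 0.99, 0.999):
        print(f"rq={rq}: Q{accuracy_to_phred(rq):.1f}, kept at Q20: {passes_q_filter(rq)}")
```

Reads like the rq=0.90 one above are exactly those that a default `ccs` run drops and that DeepConsensus therefore never sees. Comparing in accuracy space avoids rounding surprises from taking a logarithm right at the boundary.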

Compute setup

The recommended compute setup for DeepConsensus is to shard each SMRT Cell into at least 500 shards, each of which can run on a 16-CPU machine (or smaller). We find that having more than 16 CPUs available for each shard does not significantly improve runtime. See the runtime metrics page for more information.
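As a rough planning aid, the sketch below produces one chunk label per shard and estimates how many back-to-back rounds a given CPU budget needs when every shard gets 16 CPUs. It assumes the 1-based `i/N` chunk format that `ccs --chunk` accepts (check `ccs --help` for your version); `chunk_specs`, `waves_needed`, and the 1,024-CPU cluster are hypothetical.

```python
import math


def chunk_specs(n_shards: int) -> list[str]:
    """Return 1-based "i/N" chunk labels, one per shard (hypothetical helper)."""
    return [f"{i}/{n_shards}" for i in range(1, n_shards + 1)]


def waves_needed(n_shards: int, total_cpus: int, cpus_per_shard: int = 16) -> int:
    """Number of sequential rounds if each running shard gets cpus_per_shard CPUs."""
    concurrent = total_cpus // cpus_per_shard
    if concurrent < 1:
        raise ValueError("need at least cpus_per_shard CPUs to run one shard")
    return math.ceil(n_shards / concurrent)


if __name__ == "__main__":
    specs = chunk_specs(500)
    print(specs[0], specs[-1])  # first and last chunk labels: 1/500 500/500
    # 500 shards on a hypothetical 1,024-CPU cluster run 64 at a time: 8 rounds.
    print(waves_needed(500, total_cpus=1024))
```

Because more than 16 CPUs per shard does not significantly improve runtime, adding shards (or machines) is the lever that shortens wall-clock time, not giving each shard a bigger machine.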

Where does DeepConsensus fit into my pipeline?

After a PacBio sequencing run, DeepConsensus is meant to be run on the subreads to create new corrected reads in FASTQ format that can take the place of the CCS/HiFi reads for downstream analyses.
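Because the corrected reads come out as FASTQ, any standard FASTQ tooling can consume them. The minimal sketch below is a generic reader, not part of DeepConsensus: it assumes unwrapped four-line records and Phred+33 quality encoding, and computes a read-level quality from the mean per-base error probability, which is one common convention and not necessarily the one DeepConsensus uses internally. `read_fastq` and `read_quality` are hypothetical names; for gzipped output you would open the file with `gzip.open(path, "rt")`.

```python
import io
import math
from typing import Iterator, TextIO, Tuple


def read_fastq(handle: TextIO) -> Iterator[Tuple[str, str, str]]:
    """Yield (name, sequence, quality) from unwrapped, 4-line FASTQ records."""
    while True:
        header = handle.readline().rstrip("\n")
        if not header:
            return  # end of file
        seq = handle.readline().rstrip("\n")
        handle.readline()  # skip the "+" separator line
        qual = handle.readline().rstrip("\n")
        if not header.startswith("@") or len(seq) != len(qual):
            raise ValueError(f"malformed FASTQ record: {header!r}")
        yield header[1:].split()[0], seq, qual


def read_quality(qual: str) -> float:
    """Read-level Phred Q from the mean per-base error probability (Phred+33)."""
    if not qual:
        raise ValueError("empty quality string")
    errors = [10 ** (-(ord(c) - 33) / 10) for c in qual]
    return -10 * math.log10(sum(errors) / len(errors))


if __name__ == "__main__":
    # A tiny in-memory FASTQ stands in for a real output file here.
    demo = io.StringIO("@read1\nACGT\n+\nIIII\n@read2\nACGTAC\n+\nII++II\n")
    for name, seq, qual in read_fastq(demo):
        print(name, len(seq), round(read_quality(qual), 1))
```

Averaging error probabilities rather than Q values keeps a few low-quality bases from being hidden by many high-quality ones: `read2` averages Q30 per base but its mean error probability corresponds to roughly Q15.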

For variant-calling downstream

For context, we are the team that created and maintains both DeepConsensus and DeepVariant. For variant calling with DeepVariant, we tested different models and found that the best performance is with DeepVariant v1.5 using the normal pacbio model rather than the model trained on DeepConsensus v0.1 output. We plan to include DeepConsensus v1.2 outputs when training the next DeepVariant model, so if there is a DeepVariant version later than v1.5 when you read this, we recommend using that latest version.

For assembly downstream

We have confirmed that v1.2 outperforms v0.3 in terms of downstream assembly contiguity and accuracy. See the assembly metrics page for details.

How to cite

If you are using DeepConsensus in your work, please cite:

DeepConsensus improves the accuracy of sequences with a gap-aware sequence transformer

How DeepConsensus works

Watch How DeepConsensus Works for a quick overview.

See this notebook to inspect some example model inputs and outputs.

Installation

From pip package

If you're on a GPU machine:

pip install deepconsensus[gpu]==1.2.0

# To make sure the `deepconsensus` CLI works, set the PATH:

export PATH="/home/${USER}/.local/bin:${PATH}"

If you're on a CPU machine:

pip install deepconsensus[cpu]==1.2.0

# To make sure the `deepconsensus` CLI works, set the PATH:

export PATH="/home/${USER}/.local/bin:${PATH}"

From Docker image

For GPU:

sudo docker pull google/deepconsensus:1.2.0-gpu

For CPU:

sudo docker pull google/deepconsensus:1.2.0

From source

git clone https://github.com/google/deepconsensus.git

cd deepconsensus

source install.sh

If you have a GPU, run `source install-gpu.sh` instead. Currently, the only difference is that the GPU version installs `tensorflow-gpu` instead of `intel-tensorflow`.
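If you script the source install, a small helper can pick between the two scripts. The sketch below uses a heuristic that is an assumption, not something the repository provides: it treats the machine as a GPU machine if `nvidia-smi -L` runs and lists at least one GPU. `has_nvidia_gpu` and `install_script` are hypothetical names.

```python
import shutil
import subprocess


def has_nvidia_gpu() -> bool:
    """Heuristic: treat the machine as a GPU machine if `nvidia-smi -L` lists a GPU."""
    smi = shutil.which("nvidia-smi")
    if smi is None:
        return False
    try:
        out = subprocess.run([smi, "-L"], capture_output=True, text=True, timeout=10)
    except (OSError, subprocess.TimeoutExpired):
        return False
    return out.returncode == 0 and "GPU" in out.stdout


def install_script(gpu: bool) -> str:
    """Pick the repository's install script for the detected hardware."""
    return "install-gpu.sh" if gpu else "install.sh"


if __name__ == "__main__":
    # Python cannot `source` into the calling shell, so just print the command.
    print(f"source {install_script(has_nvidia_gpu())}")
```

The script only prints the command because `source` must run in your own shell for its environment changes to persist.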

(Optional) After `source install.sh`, you can run all unit tests with:

./run_all_tests.sh

Disclaimer

This is not an official Google product.

NOTE: the content of this research code repository (i) is not intended to be a medical device; and (ii) is not intended for clinical use of any kind, including but not limited to diagnosis or prognosis.


🛡️ Model Transparency Report

Technical metadata sourced from upstream repositories.

🆔 Identity & Source

- id: gh-model--google--deepconsensus
- slug: google--deepconsensus
- source: github
- author:
- license: BSD-3-Clause
- tags: bioinformatics, deep-learning, long-read-sequencing, transformers, python

⚙️ Technical Specs

- architecture: null
- params billions: null
- context length: null
- pipeline tag: other

📊 Engagement & Metrics

- downloads: 0
- stars: 256
- forks: 0
